June 10, 2026

May Updates: Smarter Deliverability Checks and a Big Launch in June

May may have been quieter than usual on the surface, but there was still plenty happening behind the scenes. This…

May 29, 2026

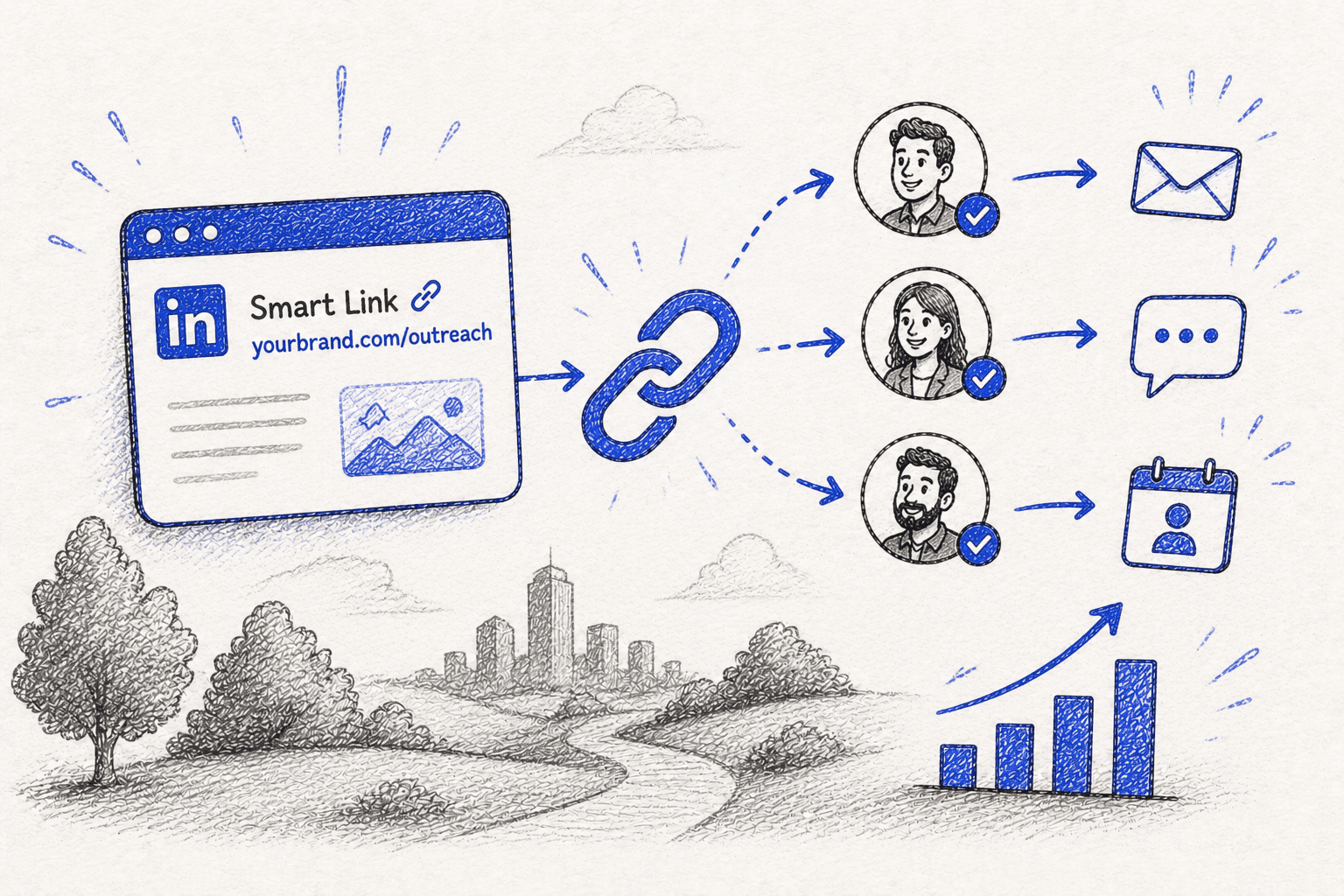

How to Use LinkedIn Smart Links to Boost Outreach [Practical Guide for 2026]

Learn how to create and use LinkedIn Sales Navigator Smart Links, why they’re valuable for lead generation, and how to combine them with tools like Snov.io to enhance outreach.

May 26, 2026

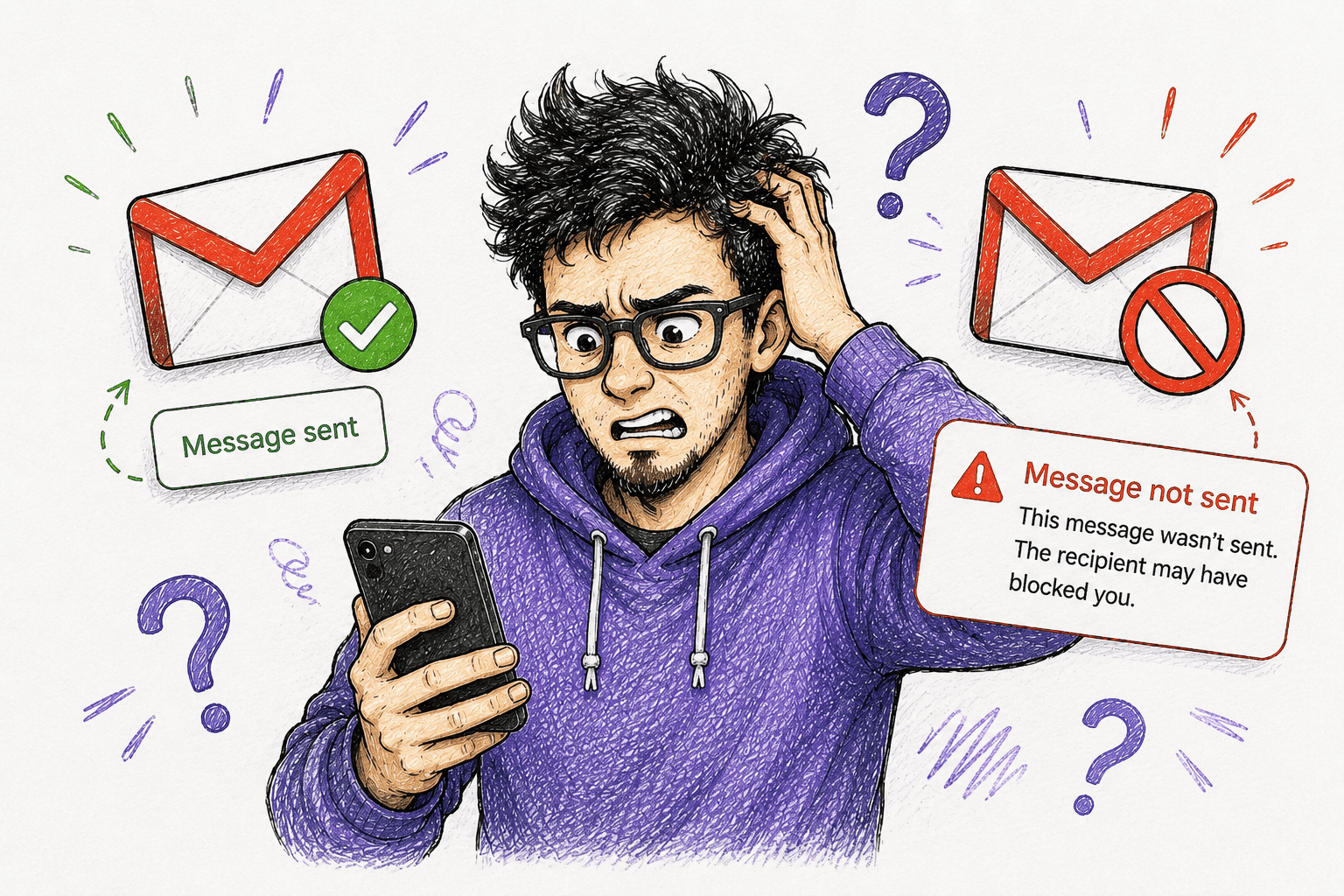

How to Know if Someone Blocked You on Gmail (I Tested All Tricks)

Wondering how to see if you are blocked on Gmail? Old hacks don’t work. I tested them all and share the methods that actually do, along with expert recommendations for handling Gmail blocking.

May 19, 2026

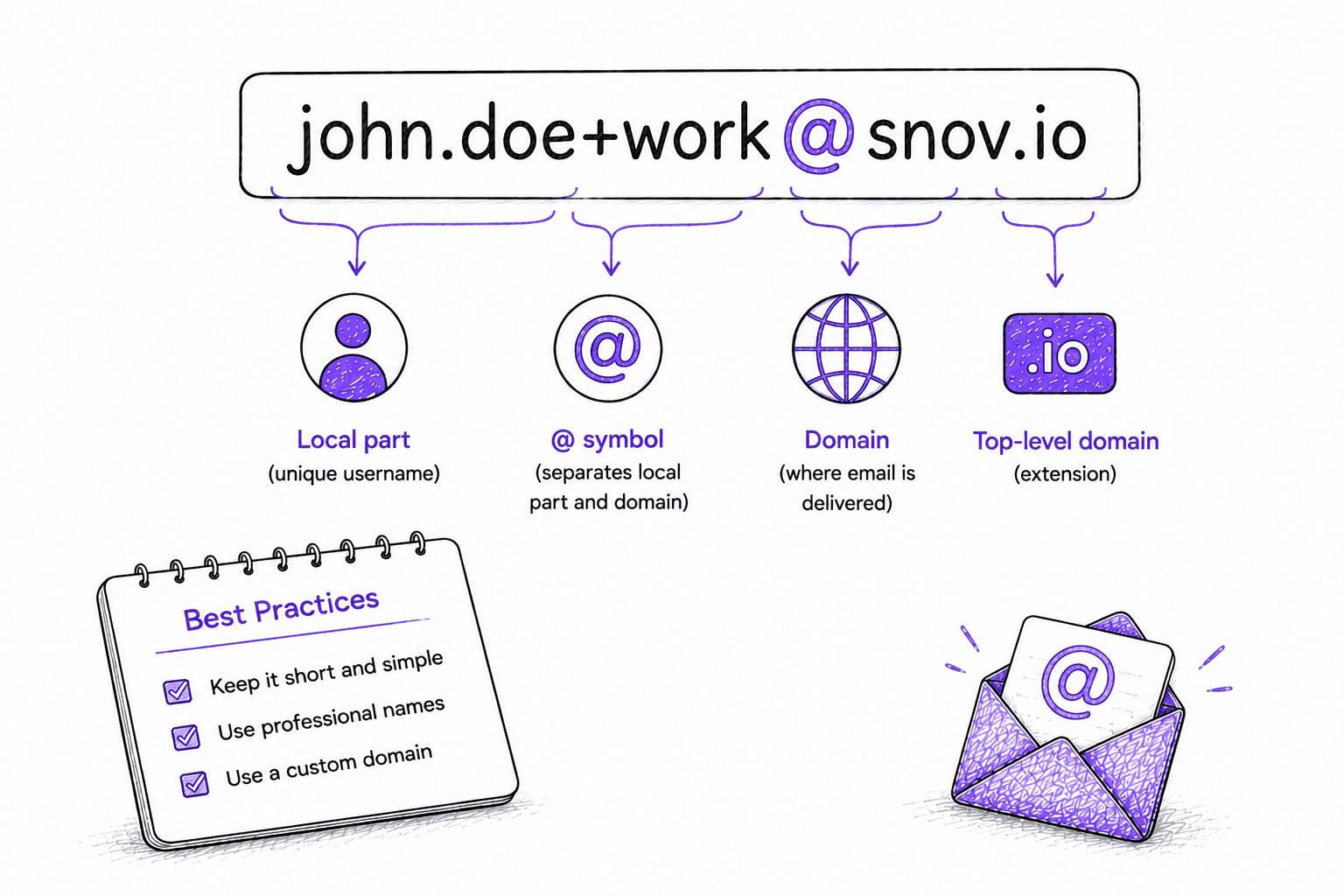

What Is Email Address Format? Rules, Components, and Best Practices for 2026

TL;DR The correct email address format follows the pattern local-part@domain.tld, where the local part identifies the specific mailbox and the…

May 15, 2026

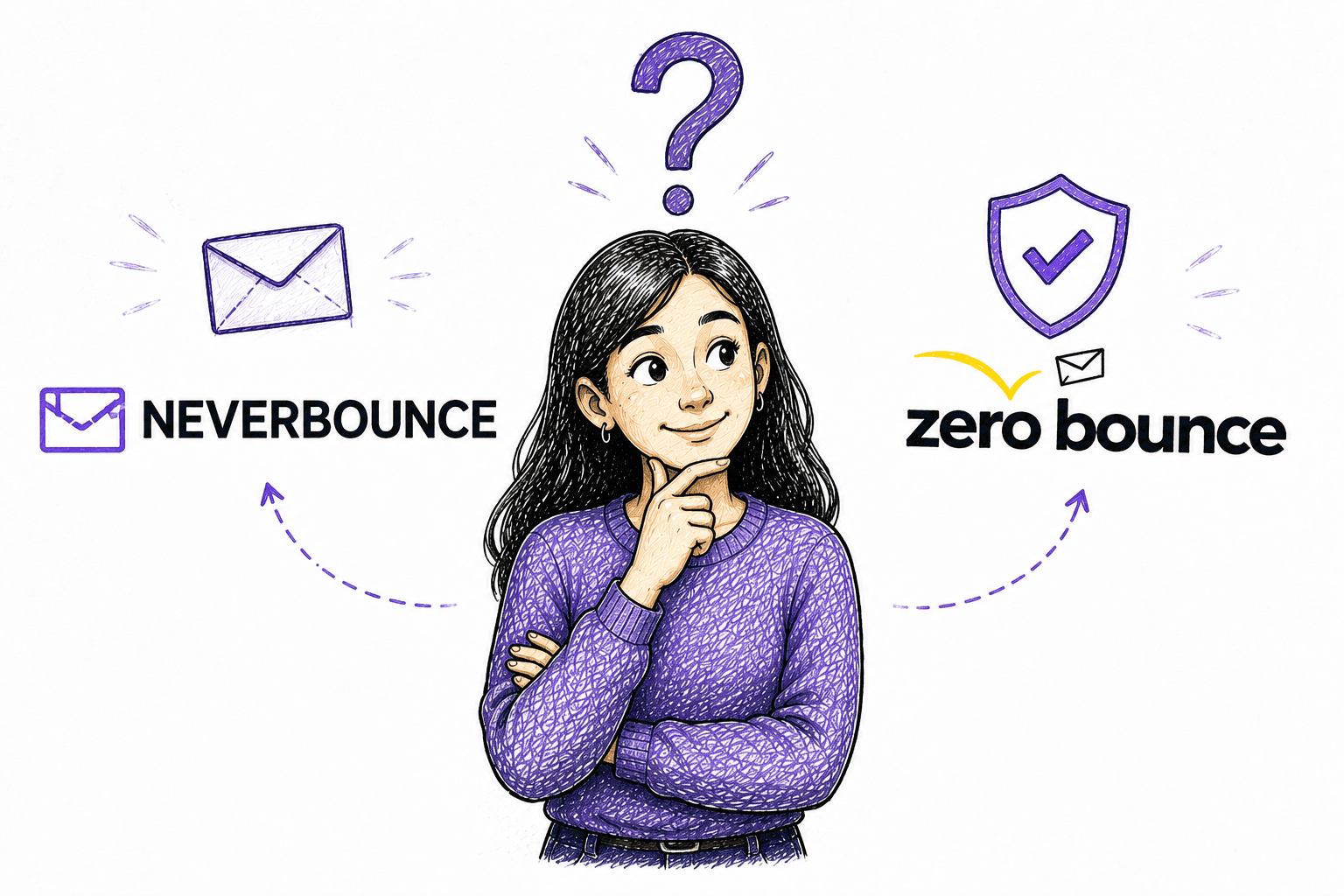

NeverBounce vs ZeroBounce Comparison: Which Would I Choose Based on My Experience?

Comparing NeverBounce vs ZeroBounce? I tested both and reviewed their features, pricing, bulk processing speed, and other aspects. Dive in to see the results.

May 13, 2026

How To Pick The Right Email Sequence Tool in 2026: My Experience And Guidelines

Learn how to pick the best solution among the top email sequence tools on the market. I tested the tools myself, comparing their features, pricing, pros and cons, and user ratings.

May 7, 2026

How To Choose The Right Inbox Placement Tool for 2026: My Honest Review and Guidelines

Learn how to pick the most effective solution among the best inbox placement tools in 2026. I analyzed the top services in depth so you can select the best fit to boost inbox rates.

May 6, 2026

How to Choose the Right B2B Data Provider for Your Team in 2026

Learn how to pick the right tool among the best B2B data providers for 2026. I reviewed 17 B2B contact database providers, comparing their top features, pricing, and user feedback.

May 5, 2026

April Showers Bring Sales Suite, Feature Requests, and More Snov.io Updates!

April at Snov.io was all about tightening the details that make your outreach actually work at scale. This month, we…

April 27, 2026

How To Build a Scalable Outreach Automation System in 2026 [Snov.io Playbook]

Explore a practical guide to outreach automation in 2026. Learn how to use automated workflows, AI agents, and deliverability tools to boost replies and booked meetings.